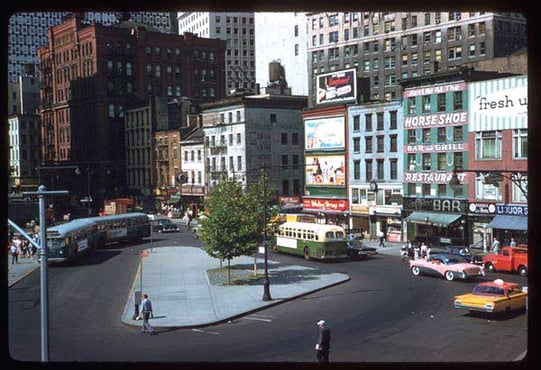

It is 1967. Ten men in suits gather around a sturdy wooden table many stories above New York City's Madison Avenue. They loudly debate the new campaign the agency is due to present to their client.

The men speak passionately about which of the creatives they believe would resonate most with the members of the general public walking on the street below. An hour and a half of circular debate ensues, filled with opinions and attacks, but nothing concrete to back up their claims. Eventually, the man at the head of the table has had enough. With a calm voice, he states his opinion one more time. Ending the debate, he orders the team to move forward with his wishes. Once again, the HiPPO (highest paid person’s opinion) in the room has won the argument.

The story is different today. We have the tools to measure exactly which creatives resonate with each audience and which fall flat. However, many organizations still do not experiment with their digital creative.

Creating great marketing campaigns requires a spark of innovation married with the reasoning of data. Nevertheless, many marketers still have objections to the science of data-driven ad production, testing, and optimization. Here are the myths that hold some advertising professionals back from dramatically increasing their return on advertising spend:

Myth 1: Testing takes a lot of time and effort. This belief was born in the Mad Men days. However, the RevJet Ad Creative Operating System makes experimentation fast, efficient, and perpetual. RevJet runs tests continuously and automatically pauses underperforming ads to reduce wasted media spend. By minimizing the number of impressions required to declare an ad creative inferior, you increase the value of every dollar you spend.
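To make the idea of "declaring an ad creative inferior" concrete, here is a minimal sketch of one common statistical approach: a one-sided two-proportion z-test on click-through rate. This is an illustration only, not a description of how RevJet works; the function name `should_pause`, its parameters, and the 1.645 threshold (roughly 95% one-sided confidence) are all assumptions made for this example.

```python
import math


def should_pause(challenger_clicks, challenger_impressions,
                 control_clicks, control_impressions,
                 z_threshold=1.645):
    """Hypothetical helper: return True when the challenger's click-through
    rate is significantly lower than the control's, using a one-sided
    two-proportion z-test with a pooled standard error."""
    # Without impressions on both sides there is nothing to compare yet.
    if challenger_impressions == 0 or control_impressions == 0:
        return False

    challenger_ctr = challenger_clicks / challenger_impressions
    control_ctr = control_clicks / control_impressions

    # Pooled click-through rate under the assumption of no real difference.
    pooled = (challenger_clicks + control_clicks) / (
        challenger_impressions + control_impressions
    )
    standard_error = math.sqrt(
        pooled * (1 - pooled)
        * (1 / challenger_impressions + 1 / control_impressions)
    )
    if standard_error == 0:
        return False

    # Positive z means the control is outperforming the challenger.
    z = (control_ctr - challenger_ctr) / standard_error
    return z > z_threshold


# Example: 50 clicks vs. 100 clicks on 10,000 impressions each.
print(should_pause(50, 10_000, 100, 10_000))
```

A check like this needs fewer impressions to reach a verdict when the gap between creatives is large. Note that re-running a fixed-threshold test after every batch of impressions inflates the false-positive rate, so a production system would typically use a method designed for continuous monitoring.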

Myth 2: Advertisers have to provide ad tags to each publisher’s site they purchase inventory from. In the past, in order to traffic new ads, they had to contact each publisher individually to pull down the old ads and put up new ones. This made experimentation inefficient and time-consuming. Today, each ad tag needs to be submitted only once. New ads are served directly into the same tags, saving time and money and dramatically increasing efficiency.

Myth 3: Some assume that testing minor changes isn’t worth the effort. Our customers have run hundreds of experiments showing that similar-looking creatives can perform dramatically differently. Sometimes a subtle shift in color or a repositioned call-to-action button can significantly increase performance.

Myth 4: Experiments that don’t generate lift are a waste of time and resources. In reality, every experiment provides information that informs future decisions. Even if an experiment doesn’t end up with twice as many conversions, there are still many lessons to be learned and applied to future iterations.

Myth 5: The final myth is that continuous experimentation is not worth the effort due to the inevitability of diminishing returns. Creative can always improve, and the best way to know how is through testing. Any savvy marketer will tell you that they’re never finished experimenting because it’s always possible to further improve creatives.

Marketers who rigorously experiment challenge themselves to improve each day. By using the most modern technology available to their organization, they can dramatically outpace their peers. Those who don't are setting themselves back fifty years by arguing in abstract and theoretical terms about which ad will perform best.

Without data, it’s impossible to know exactly which ads will resonate with your intended audience. This means that in the end, the HiPPO will always prevail in organizations that don’t conduct data-driven experiments. Do you want your organization to make decisions driven by facts, or decisions loosely based on anecdotal experience in the style of Mad Men?